Nature Knows and Psionic Success

God provides

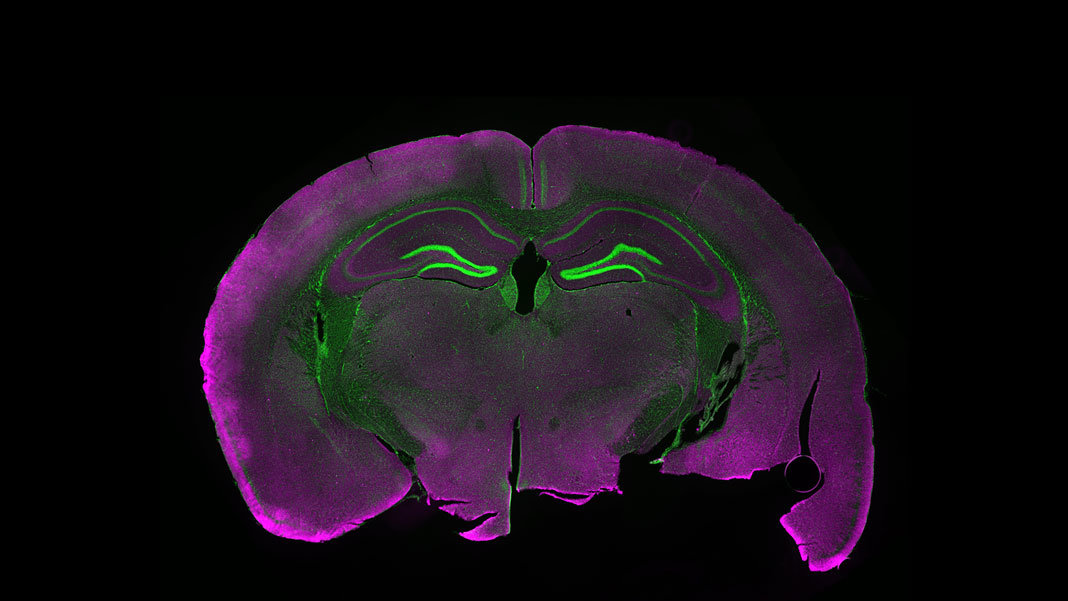

The Crucial Role of Brain Simulation in Future Neuroscience

“Do we have a chance of ever understanding brain function without brain simulations?” So asked the Human Brain Project (HBP), the brainchild of Henry Markram, in a new paper in the prestigious journal Neuron. The key, the team argued, is to consider brain simulators in the vein of calculus for Newton’s laws: not as specific ideas of how the brain works, but rather as a programming language that can execute many candidate neural models, or programs, now and in the future. When viewed not as a vanity project, but rather as the way forward to understand (and eventually imitate) higher brain functions, the response to brain simulation is a resounding yes.

Because of the brain’s complexity and chaotic nature, the authors argue, rather than reining in simulation efforts, we need to ramp up and develop multiple “brain-simulation engines” with varying levels of detail. These “general purpose” simulators, when distributed globally on a cloud-based platform, will not just provide neuroscientists with an indispensable tool to test their hypotheses about various functions in the brain, such as how we make decisions, form memories, and experience emotions. They may be the crux in finally linking the individual components of human intelligence, neurons, to functional neural circuits, and finally, to behavior or even consciousness; that is, how our experience of “self” emerges from seemingly random chattering in millions of neurons.

First, Why Not?

When Markram announced in 2009 that we would fully simulate the human brain in the next decade, he was either heralded as a visionary or condemned as a madman. Despite controversy, he subsequently skyrocketed to fame as the creator of the HBP, the European Union flagship aimed at reconstructing entire brains as “digital replicas,” capable of learning and memory, inside supercomputers. In other words, true AI. The project raised eyebrows from the start, both in its […]
