Nature Knows and Psionic Success

God provides

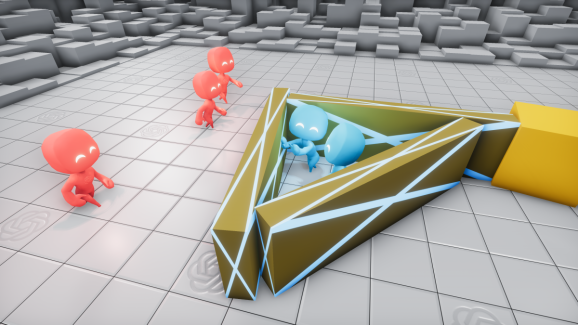

OpenAI and DeepMind teach AI teamwork by playing hide-and-seek

Above: OpenAI and DeepMind collaborated on AI that taught itself to play hide-and-seek.

The age-old game of hide-and-seek can reveal a lot about how AI weighs the decisions it faces, not to mention why it interacts the way it does with other AI within its sphere of influence or proximity. That's the gist of a new paper published by researchers at DeepMind, the Alphabet subsidiary, and OpenAI, the San Francisco-based AI research firm backed by luminaries like LinkedIn cofounder Reid Hoffman.

The paper describes how hordes of AI-controlled agents set loose in a virtual environment learned increasingly sophisticated ways to hide from and seek each other. Test results show that two-agent teams in competition self-improved faster than any single agent, which the coauthors say indicates that the forces at play could be harnessed and adapted to other AI domains to bolster efficiency.

The hide-and-seek AI training environment, which was released as open source today, joins the countless others that OpenAI, DeepMind, and DeepMind sister company Google have contributed to crowdsource solutions to hard problems in AI. In December, OpenAI published CoinRun, which is designed to test the adaptability of reinforcement learning agents. More recently, it debuted Neural MMO, a massive reinforcement learning simulator that plops agents in the middle of an RPG-like world. For its part, Google's Google Brain division in June open-sourced Research Football Environment, a 3D reinforcement learning simulator for training AI to master soccer. And last month DeepMind took the wraps off OpenSpiel, a collection of AI training tools for games.

"Creating intelligent artificial agents that can solve a wide variety of complex human-relevant tasks has been a long-standing challenge in the artificial intelligence community," wrote the coauthors […]
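The team competition described above is easiest to picture through its reward signal. The sketch below is a hypothetical, simplified illustration, not the researchers' code: it assumes a team-based, zero-sum rule in which every hider shares +1 when no hider is visible to any seeker and -1 otherwise, while every seeker receives the opposite. The function names and the `seen` visibility matrix are assumptions made up for this example; how visibility is actually computed in the environment is not covered here.

```python
from typing import List, Sequence, Tuple


def team_rewards(seen: Sequence[Sequence[bool]]) -> Tuple[float, float]:
    """Return (hider_reward, seeker_reward) for one timestep.

    Hypothetical rule: seen[s][h] is True when seeker s can see hider h.
    Hiders score +1 only if no hider is visible to any seeker, else -1.
    Seekers get the negation, so the two teams play a zero-sum game.
    """
    any_hider_seen = any(any(row) for row in seen)
    hider_reward = -1.0 if any_hider_seen else 1.0
    return hider_reward, -hider_reward


def assign_team_rewards(
    seen: Sequence[Sequence[bool]], n_hiders: int
) -> Tuple[List[float], List[float]]:
    """Give every member of a team the same shared reward.

    Sharing one reward per team (an assumption here) means each agent
    is rewarded for the team outcome, not for its own behavior alone.
    """
    hider_r, seeker_r = team_rewards(seen)
    return [hider_r] * n_hiders, [seeker_r] * len(seen)


if __name__ == "__main__":
    # Two seekers, two hiders: seeker 1 can see hider 1.
    visibility = [[False, False], [False, True]]
    print(assign_team_rewards(visibility, n_hiders=2))
```

Because one team's gain is exactly the other's loss, any strategy one side discovers immediately raises the bar for the other, which is one plausible reading of why competing teams kept improving.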