Nature Knows and Psionic Success

God provides

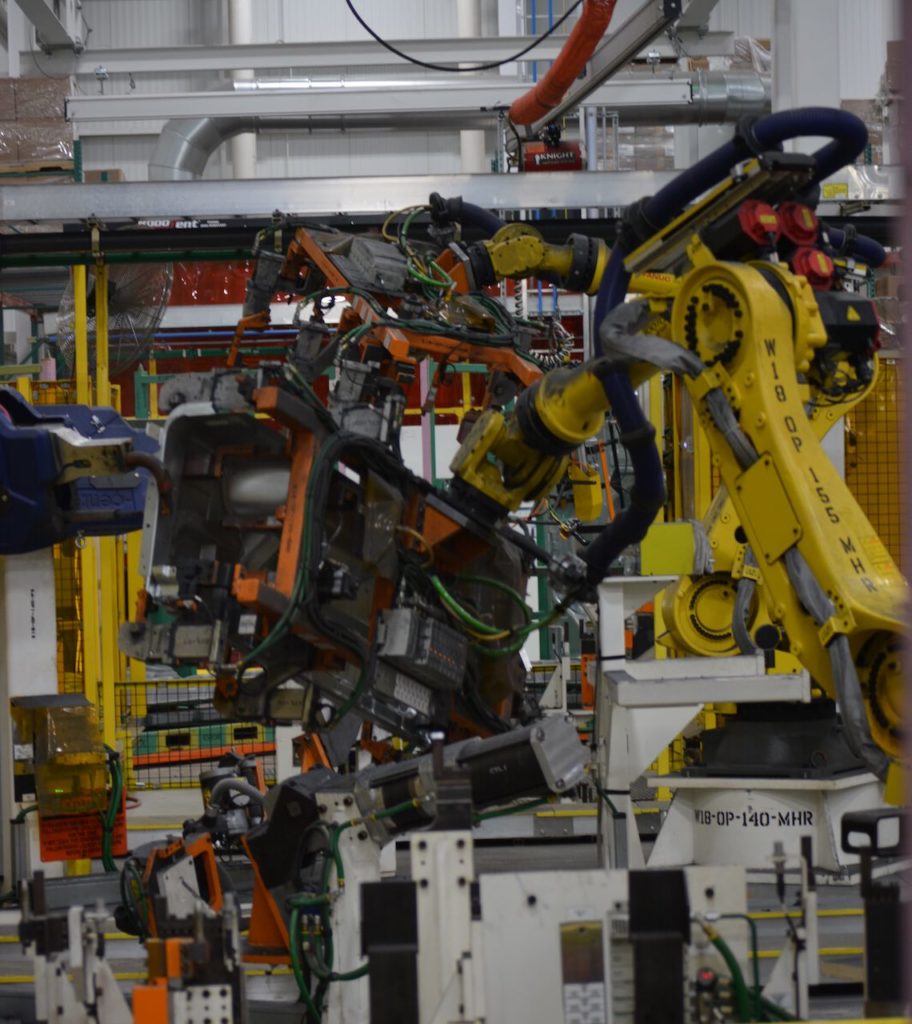

Using AI to help robots remember

Researchers at the University of Maryland have developed a method to combine perception and motor commands using hyperdimensional computing theory, which could fundamentally alter and improve how robots translate what they sense into what they do through artificial intelligence (AI).

Boston Red Sox star outfielder Mookie Betts steps up to the plate on a 3-2 count, studies the pitcher and the situation, gets the go-ahead from third base, tracks the ball’s release, swings… and gets a single up the middle. Just another trip to the plate for the reigning American League MVP.

Betts has honed natural reflexes, years of experience, knowledge of the pitcher’s tendencies, and an understanding of the trajectories of various pitches. What he sees, hears, and feels seamlessly combines with his brain and muscle memory to time the swing that produces the hit.

Now take the knowledge Betts has gleaned over years of experience and ask whether a robot can do the same thing. The answer is no, not today. The robot would need to use a linkage system to slowly coordinate data from its sensors with its motor capabilities. And its memory is horrible.

But that all may change with a method to combine perception and motor commands using hyperdimensional computing theory, which could fundamentally alter and improve the basic artificial intelligence (AI) task of sensorimotor representation: how robots translate what they sense into what they do.

“Learning Sensorimotor Control with Neuromorphic Sensors: Toward Hyperdimensional Active Perception” is a paper written by University of Maryland computer science Ph.D. students Anton Mitrokhin and Peter Sutor, Jr.; Cornelia Fermüller, an associate research scientist with the University of Maryland Institute for Advanced Computer Studies; and Computer Science Professor Yiannis Aloimonos. Mitrokhin and Sutor are advised by Aloimonos.

Integration is key

Integration is the most […]
